Creating the data source

FTP (File Transfer Protocol) is a protocol for transferring files over a network. Kondado's FTP data pipeline loads the data from the files on your FTP server into your Data Warehouse or Data Lake, so you can access it in your analytical cloud.

Adding the connector

To automate FTP ETL with Kondado for your database, follow the steps below:

1) Have your FTP service address, port, username and password handy

2) Allow the Kondado IPs on your FTP server

3) On the Kondado platform, go to the add data sources page and select the FTP data source

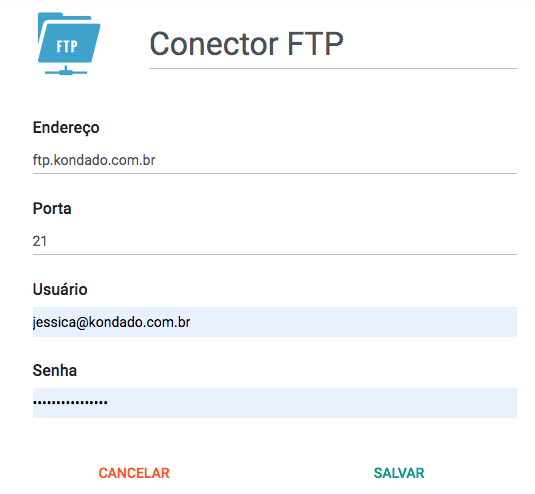

4) Name your data source and enter the information from step (1)

When filling in the "Address" parameter, enter only the host, as shown in the image, without "ftp://" at the beginning or "/" at the end.
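As an illustration of the address rule above, the sketch below reduces a pasted address to the bare host. The function name `normalize_ftp_address` and the example host are hypothetical and not part of Kondado; the platform may validate this field differently.

```python
def normalize_ftp_address(raw: str) -> str:
    """Return only the host part of an FTP address typed by a user."""
    host = raw.strip()
    # Drop a leading "ftp://" scheme (compared case-insensitively).
    if host.lower().startswith("ftp://"):
        host = host[len("ftp://"):]
    # Drop any trailing slashes.
    return host.rstrip("/")


print(normalize_ftp_address("ftp://files.example.com/"))  # files.example.com
```

The result is the value that would go in the "Address" field; the port is entered separately.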

Now just save the connector and start integrating your FTP data into the Data Lake or Data Warehouse.
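Before saving, it can help to confirm that the address, port, username and password from step (1) work at all. The sketch below uses Python's standard `ftplib` for that. The function name `check_ftp_login` and its `ftp_factory` parameter are assumptions made for this example, and a successful login from your own machine does not prove that the Kondado IPs from step (2) are allowed on the server.

```python
import ftplib


def check_ftp_login(host, port, username, password,
                    ftp_factory=ftplib.FTP, timeout=10):
    """Connect and log in, then return the directory the session starts in."""
    # ftp_factory exists only so the function can be exercised without a
    # real server; by default it is the standard ftplib.FTP class.
    ftp = ftp_factory()
    ftp.connect(host, port, timeout=timeout)
    try:
        ftp.login(username, password)
        return ftp.pwd()
    finally:
        # Close the connection whether or not the login succeeded.
        ftp.close()
```

A call such as `check_ftp_login("files.example.com", 21, "user", "secret")` (placeholder values) raises an `ftplib` or socket error when the host, port or credentials are wrong, and returns a path such as `/` when they are accepted.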

Pipelines

Summary

CSV

You can specify the full name of a file or only the beginning of the file name. All files whose names match will be integrated.
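The matching described above can be pictured as a prefix test. This is a minimal sketch that assumes a case-sensitive "starts with" comparison. The function name `match_files` and the file names are made up for the example, and Kondado's actual matching rule may differ.

```python
def match_files(file_names, name_or_prefix):
    """Keep the files whose name starts with the configured value."""
    return [name for name in file_names if name.startswith(name_or_prefix)]


files = ["sales_2024_01.csv", "sales_2024_02.csv", "stock.csv"]
print(match_files(files, "sales_"))     # ['sales_2024_01.csv', 'sales_2024_02.csv']
print(match_files(files, "stock.csv"))  # ['stock.csv']
```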

After each run, the pipeline saves the latest modification date among the files it read. On the next run, it only looks for files with a later modification date.
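The incremental behavior above can be sketched as a "high-water mark" filter. This is an illustration only: the function name `select_new_files`, the `(name, modified_at)` tuple layout and the sample dates are assumptions, not Kondado's implementation.

```python
from datetime import datetime


def select_new_files(files, last_seen=None):
    """Return (files to read, new saved date).

    files is a list of (name, modified_at) tuples; last_seen is the date
    saved by the previous run, or None on the first run.
    """
    new_files = [(name, modified) for name, modified in files
                 if last_seen is None or modified > last_seen]
    # Keep the previous date when nothing new was found.
    saved = max((modified for _, modified in new_files), default=last_seen)
    return new_files, saved


first_listing = [("a.csv", datetime(2024, 1, 1)), ("b.csv", datetime(2024, 1, 5))]
to_read, saved = select_new_files(first_listing)
print([name for name, _ in to_read])  # ['a.csv', 'b.csv']

second_listing = first_listing + [("c.csv", datetime(2024, 1, 9))]
to_read, saved = select_new_files(second_listing, saved)
print([name for name, _ in to_read])  # ['c.csv']
```

Note that the comparison is strict, so under this reading a file whose modification date equals the saved date is not read again.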

To accommodate files with different columns, the data is pivoted in the destination.
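This page does not describe the pivoted layout in detail. One common way to store files with differing columns in a single table is a "long" format with one output row per cell. The sketch below shows that idea only as an assumption: the function name `pivot_rows` and the output field names (`file`, `row`, `column`, `value`) are hypothetical, and the actual destination schema should be checked in Kondado.

```python
import csv
import io


def pivot_rows(file_name, csv_text):
    """Turn each CSV cell into one record: file, row number, column, value."""
    reader = csv.DictReader(io.StringIO(csv_text))
    records = []
    for row_number, row in enumerate(reader, start=1):
        for column, value in row.items():
            records.append({"file": file_name, "row": row_number,
                            "column": column, "value": value})
    return records


# Two files with different columns end up in the same four-field shape.
for record in pivot_rows("a.csv", "id,name\n1,Ana\n"):
    print(record)
for record in pivot_rows("b.csv", "id,city,country\n7,Recife,BR\n"):
    print(record)
```

Because every record has the same four fields, a new column in a later file adds rows rather than requiring a change to the destination table.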

Replication type: Incremental or Full (user configurable)

Notes

- Part of this documentation was automatically generated by AI and may contain errors. We recommend verifying critical information.

Add the FTP data source on Kondado

Configure your FTP server as a data source to automate ETL into your Data Warehouse or Data Lake.

Gather FTP credentials

Collect your FTP service address, port, username, and password before starting the setup on the Kondado platform.

Whitelist Kondado IPs

Allow the Kondado IPs on your FTP server so the platform can securely connect and read your files.

Select the FTP connector

On the Kondado platform, navigate to the add data sources page and choose the FTP data source option.

Configure connection details

Name your data source and enter the host address without "ftp://" or a trailing slash, followed by the port, username, and password you gathered earlier.

Save and start integrating

Save the connector to begin integrating your FTP data into your Data Lake or Data Warehouse for analysis.