S3 (Simple Storage Service) is an AWS service designed for scalable object (file) storage. Kondado's S3 integration loads CSV files from your bucket into your Data Warehouse, so you can access them in your analytics cloud.

Adding the data source

The steps below assume you are using a bucket called generic-bucket-name. Replace it with your own bucket name wherever it appears.

Step 1: Create a New IAM Policy

- Go to the IAM Console: https://console.aws.amazon.com/iam

- In the left-hand navigation, click on Policies.

- Click the Create policy button.

- Under the JSON tab, paste the following policy document:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:ListBucket"
      ],
      "Resource": [
        "arn:aws:s3:::generic-bucket-name",
        "arn:aws:s3:::generic-bucket-name/*"
      ]
    }
  ]
}
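If you manage several buckets, it can help to generate this document rather than edit it by hand. The following is a minimal sketch, not part of Kondado or AWS tooling; the function name `build_read_policy` is hypothetical. It reproduces the policy above for any bucket name.

```python
import json


def build_read_policy(bucket_name: str) -> dict:
    """Return a read/list policy document scoped to a single bucket."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:ListBucket"],
                # s3:ListBucket applies to the bucket ARN itself,
                # s3:GetObject applies to the objects under "/*".
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
            }
        ],
    }


# Print the JSON to paste into the IAM console's JSON tab.
policy_json = json.dumps(build_read_policy("generic-bucket-name"), indent=2)
print(policy_json)
```

Both resource entries are needed: listing is granted on the bucket, while reading is granted on the objects inside it.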

- Click Next: Tags, then Next: Review.

- For Policy Name, enter S3ReadListGenericBucketPolicy.

- Click Create policy.

Step 2: Create a New IAM User

- Go to the IAM Console again: https://console.aws.amazon.com/iam

- In the left-hand navigation, click on Users.

- Click Add user.

- Enter the user name S3ReadUserGenericBucket.

- Select Access key - Programmatic access.

- Click Next: Permissions.

Step 3: Attach the Policy to the User

- On the Permissions page, click Attach existing policies directly.

- Search for the policy you just created: S3ReadListGenericBucketPolicy.

- Check the box next to the policy and click Next: Tags, then Next: Review.

- Click Create user.

Step 4: Download Access Keys

- After creating the user, the Access Key ID and Secret Access Key will be displayed.

- Download or copy these credentials, as they will not be shown again.

Now, the user S3ReadUserGenericBucket has programmatic access to read and list files from the generic S3 bucket.
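Before entering the keys in Kondado, you may want to confirm that they work. The sketch below assumes the third-party `boto3` package is installed; the helper names `make_s3_client` and `can_list_bucket` are hypothetical and are not part of Kondado. It issues a single list request, which exercises the s3:ListBucket permission from Step 1.

```python
def make_s3_client(access_key_id: str, secret_access_key: str):
    """Create an S3 client from the keys obtained in Step 4 (requires boto3)."""
    import boto3  # imported lazily so can_list_bucket works with any client object

    return boto3.client(
        "s3",
        aws_access_key_id=access_key_id,
        aws_secret_access_key=secret_access_key,
    )


def can_list_bucket(client, bucket: str, prefix: str = "") -> bool:
    """Return True if the client can list the bucket, False on any error."""
    try:
        # MaxKeys=1 keeps the request cheap; we only care whether it succeeds.
        client.list_objects_v2(Bucket=bucket, Prefix=prefix, MaxKeys=1)
    except Exception as exc:  # e.g. an access-denied error from AWS
        print(f"Listing {bucket!r} failed: {exc}")
        return False
    return True


# Example (placeholders, not real credentials):
# client = make_s3_client("YOUR_ACCESS_KEY_ID", "YOUR_SECRET_ACCESS_KEY")
# print(can_list_bucket(client, "generic-bucket-name"))
```

A `False` result usually points to a mistyped key or to a policy whose Resource entries do not match the bucket name.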

Step 5: Creating it in Kondado

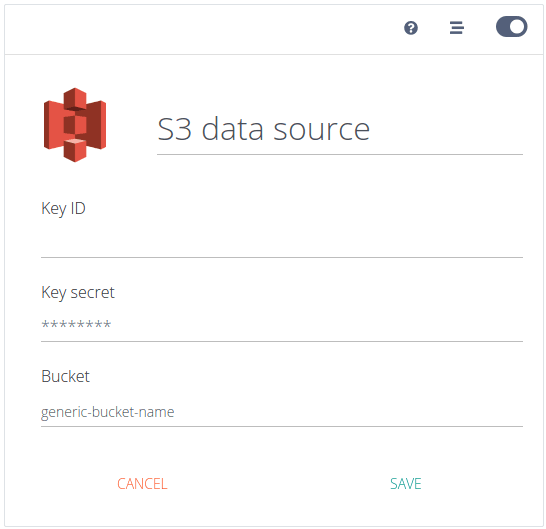

- Access our platform and click on CREATE + > Source > select the S3 data source

- Give your data source a name, fill in the values from Step 4 and your bucket name (e.g. generic-bucket-name), then click SAVE

Pipelines

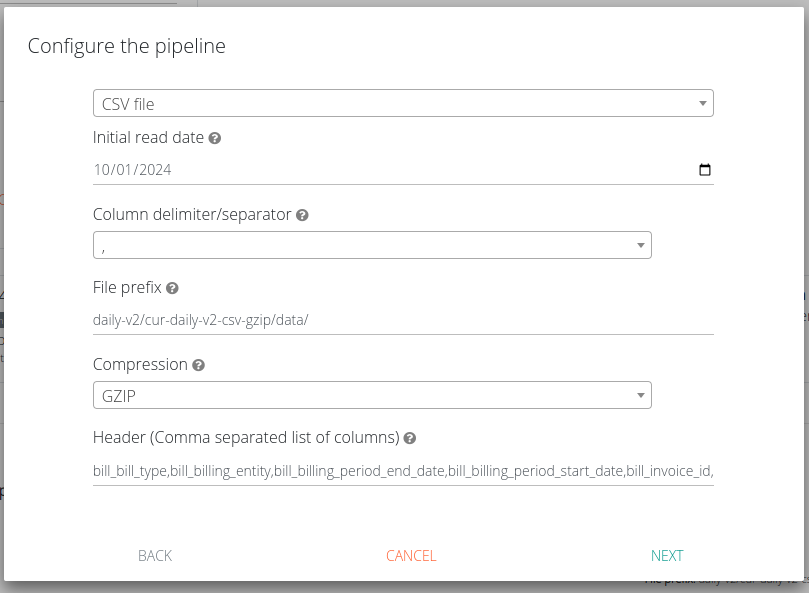

CSV files

The integration for reading CSV files will create a table in your destination where all fields will be of type text, and special characters will be replaced.

All files must have a header.

The following parameters are available:

- Start date of reading: Refers to the modification date of the files. It indicates from which date the data will start being read. If you choose to set your integration as Full, this parameter will be ignored

- Column delimiter: Specify which character is used to separate the columns in the file

- File prefix: The prefix of the files to be included. Do not start it with the bucket name, “/”, or “s3://”, and do not use wildcards. Example: folder_x/folder_y/file_prefix_

- Compression: Choose GZIP if this compression is applied to your files, or CSV if there is no compression. If you choose GZIP, your file must have the extension “.gz” or “.gzip” and contain only one file inside the archive. If you choose CSV, your file must have the “.csv” extension

- Header: List the first line (header) of your files. The column order does not need to match the files. If a file has fields that are not in this header, those fields will be ignored. If a file does not contain all the fields in the header, the missing fields are skipped without causing errors. Do not use spaces between fields, only commas. For example: col_x,col_y,col_z
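The parameter rules above can be checked locally before saving a pipeline. This is a minimal sketch and not Kondado's implementation: the function names are hypothetical, "wildcards" is assumed to mean the `*` character, and fields missing from a file are represented as `None` for illustration.

```python
import csv
import io


def is_valid_prefix(prefix: str, bucket_name: str) -> bool:
    """Apply the File prefix rules: no bucket name, "/" or "s3://" at the start, no wildcards."""
    if prefix.startswith(("/", "s3://")):
        return False
    if prefix == bucket_name or prefix.startswith(bucket_name + "/"):
        return False
    return "*" not in prefix  # assumption: "*" is the wildcard being ruled out


def has_expected_extension(key: str, compression: str) -> bool:
    """GZIP expects ".gz" or ".gzip"; CSV expects ".csv"."""
    extensions = {"GZIP": (".gz", ".gzip"), "CSV": (".csv",)}
    return key.endswith(extensions[compression])


def read_rows(csv_text: str, header: str, delimiter: str = ",") -> list:
    """Keep only the columns named in the Header parameter, in any file order."""
    wanted = header.split(",")
    reader = csv.DictReader(io.StringIO(csv_text), delimiter=delimiter)
    # Extra file columns are dropped; columns absent from the file become None.
    return [{col: row.get(col) for col in wanted} for row in reader]


sample = "col_z;col_x;extra\n3;1;ignored\n"
print(is_valid_prefix("folder_x/folder_y/file_prefix_", "generic-bucket-name"))
print(read_rows(sample, "col_x,col_y,col_z", delimiter=";"))
```

In the sample, the file's `extra` column is dropped and `col_y`, which the file lacks, is left empty, mirroring the two Header cases described above.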

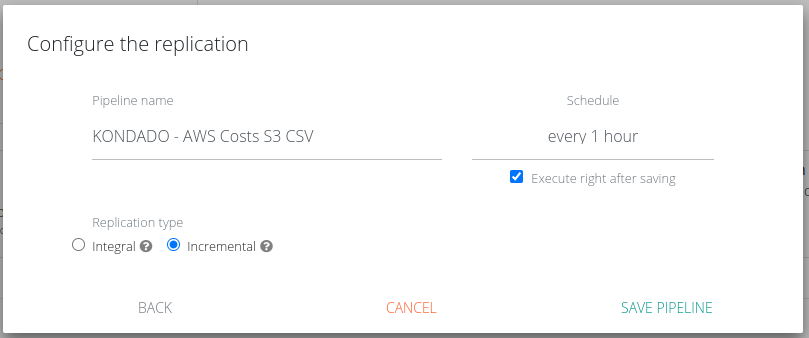

After this step, you will be able to choose the replication type for your integration.

If you choose Integral replication, every file that matches the prefix is read on each execution, and if a file is deleted between one execution and the next, its rows are also deleted from your table. Incremental replication does not remove those rows, which is why Integral replication is recommended when files are deleted (not just modified). Integral replication can increase your record count.

With Incremental replication, whenever a file is modified, its data is updated in your destination. You can locate the file that generated a given row, and when that row was inserted or modified, using the columns _kdd_file_name and _kdd_insert_time, respectively.
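The difference between the two replication types can be illustrated with a toy model. This is only a sketch of the behavior described above, not Kondado's implementation: the function names, the `value` column, and the comparison `modified > last_run` are assumptions made for illustration.

```python
from datetime import datetime


def load_file(name, lines, run_time):
    """Tag each row with its source file and the time it was written."""
    return [
        {"value": line, "_kdd_file_name": name, "_kdd_insert_time": run_time}
        for line in lines
    ]


def integral_run(files, run_time):
    """Rebuild the table from every file that currently matches the prefix."""
    table = []
    for name, (_modified, lines) in files.items():
        table.extend(load_file(name, lines, run_time))
    return table


def incremental_run(table, files, last_run, run_time):
    """Replace rows only for files modified since the last run; keep the rest."""
    changed = {name for name, (modified, _lines) in files.items() if modified > last_run}
    kept = [row for row in table if row["_kdd_file_name"] not in changed]
    for name in sorted(changed):
        kept.extend(load_file(name, files[name][1], run_time))
    return kept


run1, run2 = datetime(2024, 1, 1), datetime(2024, 1, 2)
day1 = {
    "a.csv": (datetime(2023, 12, 31), ["a1"]),
    "b.csv": (datetime(2023, 12, 31), ["b1"]),
}
table = integral_run(day1, run1)

# Before the second run, a.csv is modified and b.csv is deleted.
day2 = {"a.csv": (datetime(2024, 1, 1, 12), ["a1", "a2"])}
incremental = incremental_run(table, day2, last_run=run1, run_time=run2)
integral = integral_run(day2, run2)

print(sorted({row["_kdd_file_name"] for row in incremental}))
print(sorted({row["_kdd_file_name"] for row in integral}))
```

In this model the incremental table still holds the rows from the deleted b.csv, while the integral table contains only what is currently in the bucket.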

Add S3 as a data source on Kondado

Configure AWS IAM credentials and connect your S3 bucket to Kondado for CSV data integration.

Create an IAM policy for S3 access

In the AWS IAM Console, create a new policy named S3ReadListGenericBucketPolicy with s3:GetObject and s3:ListBucket permissions for your specific bucket ARN and its contents.

Create an IAM user with programmatic access

Add a new user (e.g., S3ReadUserGenericBucket) in the IAM Console and select Access key - Programmatic access as the credential type.

Attach the policy to the IAM user

On the Permissions page, attach the S3ReadListGenericBucketPolicy directly to your new user, then complete the user creation process.

Save the access keys securely

Download or copy the Access Key ID and Secret Access Key immediately—they are shown only once and are required for the Kondado connection.

Configure the S3 source in Kondado

In the Kondado platform, click CREATE + > Source > S3, enter your credentials, bucket name, and CSV parameters (delimiter, prefix, compression, header), then save.

Choose your replication strategy

Select Integral replication if files may be deleted between runs, or Incremental replication to track changes via _kdd_file_name and _kdd_insert_time columns.

Frequently asked questions

- Kondado needs s3:GetObject and s3:ListBucket permissions on your specific bucket. These should be configured through a custom IAM policy attached to a dedicated IAM user with programmatic access.

- GZIP-compressed files are supported with the .gz or .gzip extension, provided the archive contains only one CSV file inside.

- The file prefix must not start with the bucket name, /, or s3://. Do not use wildcards. Example: folder_x/folder_y/file_prefix_

- You can identify the file that generated each row, and when the row was inserted or modified, using the _kdd_file_name and _kdd_insert_time columns.